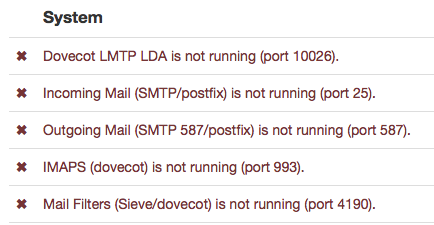

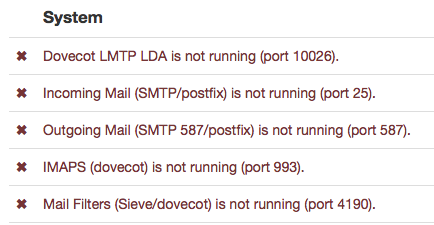

I’ve noticed that important services (postfix, dovecot, sieve) are stopped during backups, though the admin UI keeps working. For a full backup this means the server is unavailable for a couple of hours. Is this normal?

I have never seen this … usually my backups take a few minutes … so quick that I haven’t time to notice if any services are stopped during the backup.

Yes, I’d not noticed it before, but I saw it happen by chance, and then when I triggered a manual backup, the same thing happened again - and 40G of email takes quite a long time to back up.

Hi!

Indeed, the services are disabled before a backup is started. See here: https://github.com/mail-in-a-box/mailinabox/blob/master/management/backup.py#L270

In my case, the backup takes a bit longer due to ~150gig of emails and this is how I noticed.

@JoshData, can you shed some light on why those services need to be disabled during a backup?

Best regards

Bastian

I have also noticed being kicked off unexpectedly when the services get stopped. This could be annoying if several hundred users are getting logged out all at once every 30 minutes.

I was wondering if there were a simple way of including a check for the number of logged in users. If this were to return a non-zero value, then abort the backup for that cycle. Otherwise, there would be 0 users logged in and the backup could continue without interrupting users.

If so, is there a bash way of determining this, or a Python command to perform a simple ‘if’ test on the number of users?

Thanks,

Richard
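A minimal Python sketch of the check asked about above, assuming Dovecot's `doveadm who` command is available and prints one header row followed by one row per logged-in user and protocol. The function names are hypothetical and this is not part of Mail-in-a-Box.

```python
import subprocess


def count_sessions(who_output: str) -> int:
    """Count the data rows in `doveadm who` output.

    Assumption: the first non-empty line is a column header and every
    following non-empty line describes one logged-in user/protocol pair.
    """
    lines = [line for line in who_output.splitlines() if line.strip()]
    return max(len(lines) - 1, 0)


def active_sessions() -> int:
    """Ask Dovecot for its current sessions (usually needs root)."""
    result = subprocess.run(
        ["doveadm", "who"], capture_output=True, text=True, check=True
    )
    return count_sessions(result.stdout)


def backup_allowed(session_count: int) -> bool:
    """Allow the backup only when nobody is logged in."""
    return session_count == 0
```

A backup wrapper could call `backup_allowed(active_sessions())` and skip the cycle when it returns False. On a busy box with IMAP clients that stay connected, the count may rarely reach zero, so a limit on how many cycles may be skipped would probably be needed as well.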

To prevent corruption. If a backup is in progress while files on disk are being modified, then the backup might see a file in a half-saved state. It might be safe to keep postfix and dovecot running, but it also might not be safe, so to be on the safe side we turn them off.
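To illustrate the pattern described here (stop the services, run the backup, always start them again), here is a rough Python sketch. It is not the code from backup.py; `run_service` and `services_stopped` are hypothetical names, and calling the `service` command this way is an assumption about the host.

```python
import subprocess
from contextlib import contextmanager


def run_service(name, action):
    """Run `service <name> <action>`; raises if the command fails."""
    subprocess.run(["service", name, action], check=True)


@contextmanager
def services_stopped(names, run=run_service):
    """Stop the named services for the duration of the `with` block.

    Services that were stopped are started again in reverse order,
    even if the body of the block raises an exception.
    """
    stopped = []
    try:
        for name in names:
            run(name, "stop")
            stopped.append(name)
        yield
    finally:
        for name in reversed(stopped):
            run(name, "start")
```

Used as `with services_stopped(["postfix", "dovecot"]): ...`, the downtime lasts exactly as long as whatever runs inside the block, which is why a slow remote transfer translates directly into hours of unavailability.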

Is it possible to add an option that copies the files, backs up the copies, and then purges the copies (to save disk space)? I mean, Linux does have a file locking system (whatever that “lslocks” thing is) so that applications aren’t fighting over who writes to the same file. Also, are these a lot of small files, or a few big files?
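The copy, back up, purge idea could look roughly like the Python sketch below. `backup_via_staging` and its `backup_fn` argument are hypothetical, nothing like this exists in Mail-in-a-Box as far as this thread shows, and the copy needs as much free disk space as the mail store itself.

```python
import shutil
import tempfile
from pathlib import Path


def backup_via_staging(source, backup_fn, staging_parent=None):
    """Copy `source` to a temporary directory, back up the copy, then purge it.

    `backup_fn` is any callable that takes the path of the staged copy.
    The staging directory is removed even if the backup fails.
    """
    staging_root = Path(tempfile.mkdtemp(prefix="mail-staging-", dir=staging_parent))
    staged = staging_root / "copy"
    try:
        # Services would only need to be paused for this local copy,
        # not for the (much slower) upload of the backup.
        shutil.copytree(source, staged, symlinks=True)
        return backup_fn(staged)
    finally:
        shutil.rmtree(staging_root, ignore_errors=True)
```

The local copy can still catch a file mid-write if the services keep running while it is made, so this shortens the downtime rather than removing the need for it.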

Those are both good questions that I don’t have answers to.

Ya know what is an even better idea? When the backup scripts are initiated, change the config files to point all logging and new writes to another temporary directory/location, restart all services, start backup process, wait for backup process, change configuration of services back to permanent directory, move all files (if any) from temporary directory to permanent directory, and restart services.

I have a question though. Ubuntu Bionic (18.04) is the goal for the next release. When and which branch could we use to throw in features that we want to work on? This seems like a feature we’d put a WONTFIX on for now until we can get the confidence to make the first ubuntu_bionic official release.

This is starting to become a major problem for me. I have so much email data, and I don’t want to have to pay Amazon (or trust them) or pay for another backup server when I have so much perfectly good storage at home. Backing up hundreds of GB across the world to my home server can take hours. During this time everything is down, connections error out, the user experience is broken, and I have to pray I am not missing important emails. Any solution would be great.

Have you considered using rsync to back up your mail instead, so you don’t copy the same data repeatedly?

From your home machine.

rsync -avzh root@box.mybox.com:/home/user-data/mail/ /my/local/backup/folder

You may want to firewall your SSH port to allow only your home IP (if your IP is fairly static), since this opens up remote root login. Of course, you can use the Mail-in-a-Box rsync option as well.

Btw, by not cleaning up your mails (especially attachments) and moving them to proper archives, you are already wasting money paying for hundreds of GBs of emails.

In my country, anything more than 7 years old is basically meant for cold storage.

Mail servers will try to resend emails to you after your server’s downtime, so you don’t need to worry too much about the service disruption.

I use the built in rsync with ssh keys and dynamic DNS so that is all fine. The problem is miab still shuts down everything during this process. Nothing I have is over 7 years. I pay for a flat amount of storage so I am not wasting anything by using it.

While yes, mail servers are supposed to retry, not all of them do, and it adds delay to getting the mail.

If you are already using rsync, then consider using your own backup solution like the one I recommended instead of the built-in one.

You can set up a crontab entry on your box (crontab -e) to run the rsync regularly instead of doing it manually.

Since rsync only transfers files that have changed and verifies each transferred file with a checksum, you are unlikely to end up with corrupted files even with the services running, compared with the built-in S3 backup solution.

nano script.sh

#!/bin/bash

rsync -avzh /home/user-data/mail/ /remote/dir/

Make the script executable:

chmod +x script.sh

Set up the crontab with sudo crontab -e, since the cron job needs to run as root. Add the following entry to run it hourly:

0 * * * * /path/to/script.sh > file 2>&1

PS: these backup files are unencrypted (unlike the built-in backup), so you must secure them yourself.

Edited: A better solution would probably be to back up the encrypted files instead, as suggested in → Backup question, root as owner of backup file - #16 by alento

I think I am not understanding the problem correctly.

Is your backup process requiring the backup to be saved to an external server while all of the services are down?

Note: the only backup service I have used in MiaB is the default which creates backups in /home/user-data/backup/encrypted.

Well, anyway, I don’t like the solution I currently use, and the solution I thought might work doesn’t seem to, though I keep going back to it every now and then.

The sshd service can be configured to chroot users using ChrootDirectory in sshd_config. What I’ve wanted to do is make a user that only has access to /home/user-data/backup/encrypted/, as the directory and files are all owned by root. That way I can just have a remote server log in, rsync the files, and log out, without fear that anything worse could happen to MiaB.

What I’ve tried to do is make /home/user-data/backup/encrypted/ the home directory of backupuser, but sshd won’t allow it and writes the following to the logs:

Mar 10 11:40:50 mail sshd[18684]: fatal: bad ownership or modes for chroot directory component "/home/user-data/"

If anyone is interested, here is one article discussing a configuration:

Yes, the built-in rsync backup method turns all the services off while transferring remotely. Even doing the backup locally and then running rsync might be better… I can’t have hours of downtime…

I just discovered this isn’t working because of a requirement of the ChrootDirectory option:

At session startup sshd(8) checks that all components of the pathname are root-owned directories which are not writable by any other user or group.

So the only way to make it work is if the encrypted backups are stored somewhere outside the /home/user-data/ directory path. Well, answered a question for myself today. Of course, maybe there is some other way to restrict a user…
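To see which path component trips that rule, a small Python sketch can walk the path from / downwards and apply the same checks as the quoted requirement: each component must be a directory, owned by root, and not writable by group or others. This mirrors the documented rule rather than sshd's actual code, and `chroot_path_problems` is a hypothetical helper.

```python
import os
import stat
from pathlib import PurePosixPath


def chroot_path_problems(path, stat_fn=os.stat):
    """Return a list of reasons why `path` would be rejected as a chroot.

    Every component from "/" down to `path` must be a directory owned by
    uid 0 and must not be writable by group or others.
    """
    target = PurePosixPath(path)
    components = [target, *target.parents]  # path itself, then up to "/"
    problems = []
    for comp in reversed(components):  # check from "/" downwards
        st = stat_fn(str(comp))
        if not stat.S_ISDIR(st.st_mode):
            problems.append(f"{comp}: not a directory")
        elif st.st_uid != 0:
            problems.append(f"{comp}: owned by uid {st.st_uid}, not root")
        elif st.st_mode & 0o022:
            problems.append(f"{comp}: writable by group or others")
    return problems
```

Running `chroot_path_problems("/home/user-data/backup/encrypted/")` on the box should list the offending components, which fits the log line above complaining about "/home/user-data/".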

Interesting read. Would a symbolic link to the folder work?

Not for anywhere outside of the chroot directory, so you wouldn’t be able to go from a chroot of /home/user/ to anywhere outside of there. It would be like a symbolic link going outside of / for non-chrooted users, I think.

Instead of chroot, why not restrict your SSH port to only certain IPs? Btw, I believe generating the /encrypted/ folder requires the services to be down as well.