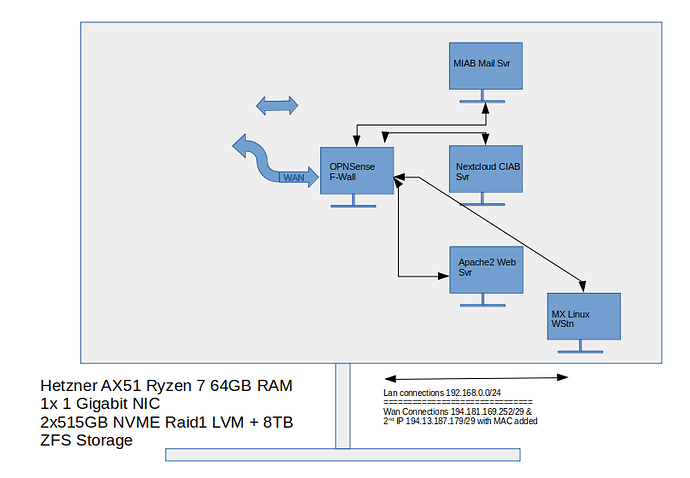

My setup is a bare-metal AMD Ryzen 7 with a single NIC: IP 1 for the public/internet connection, plus an additional IP 2 and MAC address for virtualization. It is configured as a Debian 11 Linux server running Proxmox, with 2 Ubuntu 20.04 LTS VMs for Mail-in-a-box_LDAP (VM1) and Cloud-in-a-Box (Nextcloud) (VM2), and an MX Linux workstation (VM3) for maintenance/testing.

The MIAB_LDAP serves LDAP over port 636 to mail users and is designed to provide the CIAB (Nextcloud) with the same LDAPS service for Nextcloud users, so that the two services can use the same credentials for users on both mail and collaboration/storage services.

The 4th VM in this setup is an OPNsense server, version 20.19. It serves as the gateway and firewall and, once fully working, may also provide proxy and automated certificate services through Let’s Encrypt.

I can access the internet from all VMs and from the host, but LDAPS will not connect between VM1 and VM2.

I am now frustrated and tired after 3 days of failure. There seems to be no reason for this failure. The default layout is shown in the attachment.

When I issue

    ~# lsof -i -P

on the mail server with the LDAP database, I get 3 slapd entries:

    slapd 4242 openldap 8u IPv4 33726 0t0 TCP localhost.localdomain:389 (listen)
    slapd 4242 openldap 9u IPv4 33728 0t0 TCP *:636 (listen)
    slapd 4242 openldap 10u IPv4 33729 0t0 TCP *:636 (listen)

So it seems the ldaps port is open and listening.
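A listening socket on the server does not by itself prove that the client VM can reach it, so a probe run from the Nextcloud VM may help separate a network problem from a TLS or LDAP problem. The following is a minimal sketch, not a definitive test: the function name `probe_ldaps` is made up for illustration, and certificate verification is deliberately switched off so that only reachability and the TLS handshake are checked.

```python
import socket
import ssl


def probe_ldaps(host, port=636, timeout=5.0):
    """Return a short status string describing how far a connection gets."""
    try:
        # Step 1: plain TCP connect. A failure here points at routing,
        # bridging, or a firewall rather than at slapd or TLS.
        raw = socket.create_connection((host, port), timeout=timeout)
    except OSError as exc:
        return f"tcp-failed: {exc}"

    # Step 2: TLS handshake. Certificate checks are disabled on purpose
    # (assumption: we only want to know whether a handshake completes).
    ctx = ssl.create_default_context()
    ctx.check_hostname = False
    ctx.verify_mode = ssl.CERT_NONE
    try:
        with ctx.wrap_socket(raw, server_hostname=host) as tls:
            return f"tls-ok: {tls.version()}"
    except (ssl.SSLError, OSError) as exc:
        raw.close()
        return f"tls-failed: {exc}"
```

Calling, for example, `print(probe_ldaps("192.168.0.10"))` on VM2 (where `192.168.0.10` is only a placeholder for the mail VM's LAN address) would report `tcp-failed` if the packet never arrives, and `tls-failed` if the port is reachable but the handshake breaks.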

As far as the network is concerned, thanks for the warning about the IPs, but they are not my real ones.

I have an internal LAN in the range 192.168.0.0/24. Both the mail and Nextcloud VMs are on that LAN, and the ufw firewall on the mail/LDAPS VM is set to allow LDAPS from anywhere, including the IP of the Nextcloud/LDAP client.
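Whether the two VMs really sit in the same subnet matters here: if they do, and they are attached to the same bridge, traffic between them would normally go directly from one to the other instead of being routed through the gateway. A small sketch of that check follows; the helper name `same_lan` and the example addresses are assumptions, only the 192.168.0.0/24 range comes from the setup described above.

```python
import ipaddress

# The LAN range described in this post.
LAN = ipaddress.ip_network("192.168.0.0/24")


def same_lan(addr_a, addr_b, network=LAN):
    """True when both addresses fall inside the given network."""
    a = ipaddress.ip_address(addr_a)
    b = ipaddress.ip_address(addr_b)
    return a in network and b in network
```

For instance, `same_lan("192.168.0.10", "192.168.0.20")` is true, while `same_lan("192.168.0.10", "192.168.1.20")` is false; in the second case the connection would have to be routed, so the gateway's rules would come into play.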

It is true that I have one network card, with a public IP set on vmbr0.

The VMs reach the outside world through vmbr1, using the separate MAC address ordered specifically for the second IP address (bought for Proxmox virtualization), with a routed configuration similar to:

    iface lo inet loopback

    iface enp35s0 inet manual
        up route add -net X.X.X.X/X netmask 255.255.255.X gw X.X.X.X dev enp35s0
        route X.X.X.X./X via X.X.X.X

    auto vmbr0
    iface vmbr0 inet static
        address <PUBLIC-IP/29>
        gateway
        bridge-ports enp35s0
        bridge-stp off
        bridge-fd 0
        pointtopoint
        bridge_hello 2
        bridge_maxage 12

    auto vmbr101
    iface vmbr101 inet manual
        bridge-ports none
        bridge-stp off
        bridge-fd 0
    #OPNSense_LAN1

    auto vmbr100
    iface vmbr100 inet manual
        bridge-ports none
        bridge-stp off
        bridge-fd 0
    #OPNSense_LAN0

    auto vmbr1
    iface vmbr1 inet manual
        bridge-ports none
        bridge-stp off
        bridge-fd 0
    #OPNSense_WAN0 Assigned to MAC Address of 2nd PUBLIC-IP
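In this layout only vmbr0 has a physical port; vmbr1, vmbr100 and vmbr101 are declared with `bridge-ports none`, so they carry traffic only between the VMs attached to them. It may therefore be worth confirming which bridge each VM's virtual NIC is attached to. As a rough aid, here is a sketch that lists the `bridge-ports` value per interface from a config in this format; the function name `bridge_ports` and the shortened sample text are for illustration only.

```python
def bridge_ports(config_text):
    """Map each iface name to its bridge-ports value (None if absent)."""
    result = {}
    current = None
    for line in config_text.splitlines():
        words = line.split()
        # Skip blank lines and comment lines.
        if not words or words[0].startswith("#"):
            continue
        if words[0] == "iface" and len(words) > 1:
            current = words[1]
            result[current] = None
        elif words[0] in ("bridge-ports", "bridge_ports") and current:
            result[current] = " ".join(words[1:])
    return result


# Shortened sample in the same shape as the configuration above.
SAMPLE = """\
auto vmbr0
iface vmbr0 inet static
    bridge-ports enp35s0
auto vmbr1
iface vmbr1 inet manual
    bridge-ports none
"""

print(bridge_ports(SAMPLE))
```

Bridges that report `none` have no uplink of their own, so two VMs can only talk to each other directly if they share one of them, or if OPNsense routes between the bridges they are on.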